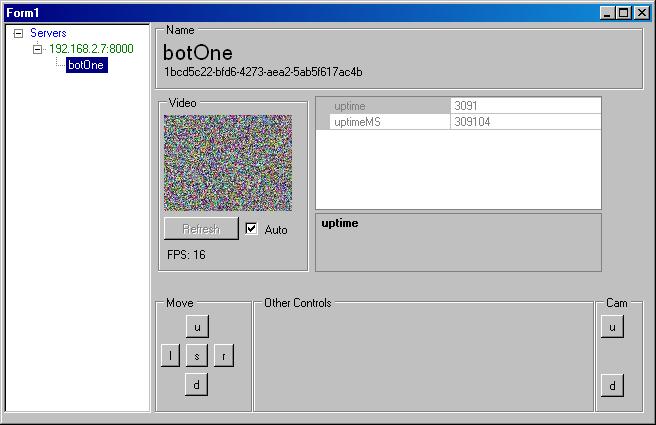

Right at the point where I had video working and everything migrated to the F4, with nothing left to do but move it to the Android phone (and test) to achieve my milestone, I got seriously sidetracked by ROS.

I seem to run into this stumbling block again and again: I get far enough along in a project that I already have the next generation in mind, and it’s so much better (in my mind) that I’m prone to throwing away large portions of the previous design and code to move on to the “next thing” without finishing the almost-completed product or milestone. I am going to stop doing that. I see now that by using ROS and the open libraries out there my robot could go much further than I alone could ever take it; however, I only learned that lesson by going this far down the road I took. I’m sure I haven’t learned all of the lessons yet, so with such a small amount of development left to meet my modest milestone, I’m going to go back to my old code and meet the milestone before going off on any tangents.

Tangents

I can rest a little knowing that all of the ideas I had will be written down and I can pick up where I left off.

Basically everything I have done up until now has already been done, and done much better, in ROS by the amazing people at Willow Garage. You could sum up my most intricate design as a high-power microcontroller pumping video out over rosserial to rosjava_core and viewing it in rviz. If you gave me 20 years, maybe I could have written all of that code myself. There is no “game” in ROS; it is real robotics, but we can create a subscriber or service that does what we want.

So, to sum up, here is how I plan to move forward with ROS after meeting my last milestone:

- Investigate existing hardware that works with rosserial and could use a camera. It looks like ROS works on embedded Linux or Arduino (but not all Arduinos yet). Maybe the Raspberry Pi is the best option, since it can already do WiFi and embedded Linux and would have drivers for the camera.

- Embed ROS or rosserial into the device, depending on which one we choose. If we go the Pi route we can just run ROS or rosjava right on the Pi itself. Then we basically have a full robotics platform for $35 plus SD card and WiFi. We would want to make it headless eventually.

- Get the device working with a camera, then publish a camera node in ROS.

- Set up motors and subscribe to a joystick publisher. Joystick to motors.

- Create clients to view/subscribe to the camera node and publish the joystick node. Maybe we could use rosjava or some other available software and simply point it at the ROS master? Either way we would want the predefined, usable ROS format, and we can use the currently available ROS tools to test and debug all kinds of stuff.

- Create an easy-to-use API for beginners so they can easily use our robot and develop their own applications. I know ROS has a learning curve; maybe we could wrap it and make it simpler.
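The “joystick to motors” step in the list above could look something like the rough sketch below. It is only an illustration under assumptions: it assumes a two-wheel differential-drive body (the post does not say what the body will be), joystick axes in the range -1.0 to 1.0, and the names `clamp` and `joystick_to_motors` are made up for this example. In ROS, logic like this would sit inside the callback of whatever subscribes to the joystick publisher.

```python
def clamp(value, low=-1.0, high=1.0):
    """Keep a motor command inside the allowed range."""
    return max(low, min(high, value))


def joystick_to_motors(forward, turn):
    """Mix two joystick axes into left/right motor commands.

    forward: -1.0 (full reverse) to 1.0 (full forward)
    turn:    -1.0 (full left)    to 1.0 (full right)

    Assumes a differential-drive robot: turning right means the
    left motor speeds up and the right motor slows down.
    """
    left = clamp(forward + turn)
    right = clamp(forward - turn)
    return left, right


if __name__ == "__main__":
    # Stick pushed forward with a slight right turn.
    print(joystick_to_motors(0.5, 0.25))
```

Keeping the mixing in a plain function like this means it can be tested on a desktop without any robot or ROS install, and then dropped into the subscriber later.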

So, I’m going back to meeting my milestone even if it sets me back from ROS for a few more weeks. I want to make sure I’ve learned all the lessons of my current path and not just 85% of them. But then I’m going full speed with ROS and, more than likely, the Raspberry Pi, which is where I should have started to begin with, imho.

ROS/Pi cost:

Raspberry Pi $35

WiFi WL-700N-RXS $11 (omg, are you kidding me? 150Mbps!)

Camera board $25 (official Raspberry Pi camera, expected to be released soon)

Body $30?

All of that adds up to $101. My goal was always below $100, and that is really close. With the Pi there is the possibility of having multiple cameras, and there should be a huge reduction in the amount of development. The bandwidth is almost 200x and the speed is about 10x, but the price is only about 2x (for the main board vs. the STM32 F4).
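As a quick sanity check on that total, here is a small sketch that adds up the parts list. The prices are the ones quoted above (the body is still a guess), and the dictionary keys and variable names are just for illustration.

```python
# Prices in US dollars, taken from the parts list in this post.
# The body price is an estimate, not a quote.
parts = {
    "Raspberry Pi": 35,
    "WiFi adapter (WL-700N-RXS)": 11,
    "Camera board": 25,
    "Body (estimate)": 30,
}

budget = 100
total = sum(parts.values())
over_budget = total - budget

print(f"Total: ${total}, over budget by ${over_budget}")
```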

Granted, if I did spend years developing the STM32F103 into something usable, it could possibly cost less than $20 for the whole board, but in my opinion that’s not worth it.

And if I ever want to, I can always go back to the milestone (video and control through the phone) and pick up where I left off.

http://wiki.jigsawrenaissance.org/ROS_on_RaspberryPi

http://www.raspberrypi.org/archives/tag/camera-board

http://pingbin.com/2012/12/setup-wifi-raspberry-pi/

http://www.ros.org/wiki/rosserial