I will try to explain all of the steps I went through to do this. Since I’ve already done it, I might miss a few. Feel free to add comments and I’ll respond and/or update the post. I’m not going to rewrite instructions that already exist on other pages; I will just link to them.

Setup Your Raspberry Pi

OS

I assume you have some Debian-based version of Linux running on your Raspberry Pi. I used a version of the OS compiled with ROS included because I plan on using ROS asap. I got my version from here.

http://www.instructables.com/id/Raspberry-Pi-and-ROS-Robotic-Operating-System/

Direct Download Link of Image (Raspbian)

Connection

Please use an ssh client and ethernet to set up the initial connection, or if you already have wifi set up, all the better. I’ll assume you’re talking to your device through ethernet for now.

WebCam

Plug your webcam into a USB port, then run “lsusb”. Hopefully you see a new device there; if not, you need to search the net on getting your camera to work. Most information written for Ubuntu or Debian is still relevant, and I find lots of solutions in posts that are not Pi specific.

If you do have a new device, look at its ID and check the list of supported/verified devices here http://elinux.org/RPi_VerifiedPeripherals (if it’s not there you might be out of luck, but try anyway).

The application I used was mjpg_streamer. Instructions on how to install it and make it start on boot can be found here.

http://www.phillips321.co.uk/2012/11/05/raspberrypi-webcam-mjpg-stream-cctv/

Once the software was installed, I did not use the commands from that post. I reduced the frame rate and used raw YUV (the -y flag). If your camera supports mjpg you might save some CPU cycles by using it. But since I was after real time, this is what I ran.

mjpg_streamer -i "/usr/lib/input_uvc.so -d /dev/video0 -y -q 40 -r 160x120 -f 10" -o "/usr/lib/output_http.so -p 8081 -c un:pw -w /home/pi/mjpg-streamer/mjpg-streamer/www/" -b

The -w option at the end, with the www path, threw me for a loop. It is the location of your html pages. A whole bunch of really good demo pages come with the package, so if you haven’t deleted them, use them to test at this point and make sure everything works.

Starting it on boot is also covered in the instructions I linked to above.

At this point you should be able to stream your camera over ethernet. Test different frame rates and see the frame count on the demo javascript page.
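To compare frame rates with numbers instead of by eye, here is a minimal sketch of the arithmetic a frame counter does. It assumes you record a timestamp (in milliseconds) each time a frame finishes loading; the function name `averageFps` and the sample timestamps are made up for illustration and are not part of mjpg_streamer.

```javascript
// Average frames per second from a list of frame arrival times (ms).
// Hypothetical helper for illustration; not part of mjpg_streamer.
function averageFps(timestampsMs) {
  if (timestampsMs.length < 2) {
    return 0; // need at least two frames to measure an interval
  }
  const elapsedMs = timestampsMs[timestampsMs.length - 1] - timestampsMs[0];
  if (elapsedMs <= 0) {
    return 0; // guard against identical or out-of-order timestamps
  }
  // N timestamps span N - 1 frame intervals.
  return ((timestampsMs.length - 1) * 1000) / elapsedMs;
}

// Made-up sample: one frame every 100 ms.
const sampleTimestamps = [0, 100, 200, 300, 400];
console.log(averageFps(sampleTimestamps).toFixed(1) + " fps"); // prints "10.0 fps"
```

In a browser you would push `Date.now()` into the array from the image's onload handler while polling snapshots.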

I removed the cable from my webcam and soldered the usb connector directly to the board. I might not do that next time, but a 5ft cable was really getting in the way. A 6 inch cable would be nice. I took out the Mic also, it was simply glued to the inside of the webcam.

Wifi

Plug in your wifi dongle. Mine was kinda fat and had a huge plastic enclosure, so I stripped that off, which allowed me to plug two devices into my Model B. Again use lsusb to see if you have a new device; if you do, try ifconfig and see if there is a wlan0 device. At this point I set my device to automatically connect to my wireless router using a static ip address and wpa. I’m going to try to set it up as an access point, which will be easy on my phone but a pain for my wired computers. Anyway, this is what my entry looks like in /etc/network/interfaces; add a similar entry for connecting to your router.

auto wlan0
allow-hotplug wlan0
iface wlan0 inet static
    address 192.168.2.86
    netmask 255.255.255.0
    broadcast 192.168.2.255
    gateway 192.168.2.1
    wpa-ssid "belkin.544"
    wpa-psk "yourwifipassword"

You will have to change the password and will more than likely have a different ssid. Once you add that to your interfaces file, type “ifconfig wlan0 down” then “ifconfig wlan0 up” and see if your wifi is actually connected. The easiest way I can think of is to ping the static ip you assigned to the wifi device from the computer you’re using, or you can unplug the ethernet and try to ping something on your network.

If you have issues, search the internet. These are all basic wifi setup steps on any Linux distro.
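When you adapt the static entry to your own network, the address, netmask, broadcast and gateway have to agree with each other. The sketch below is just a sanity check of that arithmetic, using the values from my entry; the helper names (`ipToInt`, `broadcastFor`, `sameSubnet`) are made up for this example.

```javascript
// Convert dotted-quad IPv4 text to an unsigned 32-bit number.
function ipToInt(ip) {
  const parts = ip.split(".").map(Number);
  const valid =
    parts.length === 4 &&
    parts.every((p) => Number.isInteger(p) && p >= 0 && p <= 255);
  if (!valid) {
    throw new Error("not a valid IPv4 address: " + ip);
  }
  return ((parts[0] << 24) | (parts[1] << 16) | (parts[2] << 8) | parts[3]) >>> 0;
}

// Convert an unsigned 32-bit number back to dotted-quad text.
function intToIp(n) {
  return [n >>> 24, (n >>> 16) & 255, (n >>> 8) & 255, n & 255].join(".");
}

// Broadcast address = network address with all host bits set to 1.
function broadcastFor(address, netmask) {
  return intToIp((ipToInt(address) | ~ipToInt(netmask)) >>> 0);
}

// Two addresses are on the same subnet if their masked parts match.
function sameSubnet(a, b, netmask) {
  const mask = ipToInt(netmask);
  return ((ipToInt(a) & mask) >>> 0) === ((ipToInt(b) & mask) >>> 0);
}

console.log(broadcastFor("192.168.2.86", "255.255.255.0")); // prints "192.168.2.255"
console.log(sameSubnet("192.168.2.86", "192.168.2.1", "255.255.255.0")); // prints true
```

If the gateway check prints false for your values, the Pi will not be able to reach the router with that entry.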

GPIO

To turn the motors on and off, or left and right, you need control of your gpio. I used webiopi. The installation instructions, and how to install it as a service that starts on boot, can be found on their wiki.

https://code.google.com/p/webiopi/wiki/INSTALL

This portion was really easy to install so I won’t go into detail on it.

Putting it together

So we have a webserver listening on one port and gpio control listening on another port. I did not know whether to make a page with the webstream and put it in the webiopi www folder, or a page with gpio and put it in the mjpg_streamer www directory. I think I tried both, and what worked best was putting my test.html in the webiopi directory. I haven’t tested this a whole bunch but I know that way works.

So create a new page in /usr/share/webiopi/htdocs and you can begin working on your remote control page. The stream itself is almost too easy, a one liner

<img width="320" height="240" src="http://192.168.2.86:8081/?action=stream">
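The one liner hardcodes my Pi's address. If your address changes, one option is to build the URL in script instead. This is only a sketch: `mjpgUrl` is a made-up helper name, and it assumes the page is served from the Pi itself (as test.html in the webiopi htdocs folder is), so the browser's `window.location.hostname` is the Pi's address.

```javascript
// Build an mjpg_streamer URL for a given host, port and action.
// "stream" gives the continuous stream, "snapshot" a single frame.
// Hypothetical helper name; the URL pattern is the one used above.
function mjpgUrl(host, port, action) {
  return "http://" + host + ":" + port + "/?action=" + action;
}

console.log(mjpgUrl("192.168.2.86", 8081, "stream"));
// prints "http://192.168.2.86:8081/?action=stream"

// In the page itself (browser only), assuming an <img id="cam"> element:
//   document.getElementById("cam").src =
//     mjpgUrl(window.location.hostname, 8081, "stream");
```

The port 8081 matches the -p option in the mjpg_streamer command I ran earlier; change it if you picked a different one.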

The gpio was a little more finicky. At first I could get NOTHING to work until I put webiopi().refreshGPIO(true); at the beginning of my webiopi().ready function. I don’t know why, and it is not stated on their page.

Here is the full code of my test.html. I have to switch both motors at the same time since it’s a tracked vehicle.

pi@raspberrypi:/usr/share/webiopi/htdocs$ cat test.html

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.1//EN"

"http://www.w3.org/TR/xhtml11/DTD/xhtml11.dtd">

<html xmlns="http://www.w3.org/1999/xhtml" xml:lang="en">

<head>

<title>zBotPiTest</title>

<meta http-equiv="content-type" content="text/html; charset=iso-8859-1" />

<script type="text/javascript">

/* Copyright (C) 2013 Michael McCarty http://www.zonerobotics.com

*/

</script>

</head>

<body>

<img width="320" height="240" src="http://192.168.2.86:8081/?action=stream"><br/>

<script type="text/javascript" src="/webiopi.js"></script>

<script type="text/javascript">

function makeMUMDButton(id,label)

{

var button = $('<button type="button" class="Default">');

button.attr("id", id);

button.text(label);

button.bind("mousedown", function () { mousedown(id); });

button.bind("mouseup", function () { mouseup(id); });

return button;

}

webiopi().ready(function()

{

webiopi().refreshGPIO(true);

var content, button;

content = $("#content");

button = webiopi().createGPIOButton(21,"21");content.append(button);

button = webiopi().createGPIOButton(22,"22");content.append(button);

button = webiopi().createGPIOButton(10,"10");content.append(button);

button = webiopi().createGPIOButton(9,"9");content.append(button);

button = webiopi().createGPIOButton(11,"11");content.append(button);

}

);

function mousedown(dir){

switch(dir)

{

case 1:

webiopi().digitalWrite(0,1);

webiopi().digitalWrite(4,1);

break;

case 2:

webiopi().digitalWrite(1,1);

webiopi().digitalWrite(17,1);

break;

case 3:

mouseup(0);

webiopi().digitalWrite(1,1);

webiopi().digitalWrite(4,1);

break;

case 4:

mouseup(0); // all off

webiopi().digitalWrite(0,1);

webiopi().digitalWrite(17,1);

break;

default:

mouseup(0); // just stop

}

}

// this will turn all motors off !

function mouseup(){

webiopi().digitalWrite(0,0);

webiopi().digitalWrite(1,0);

webiopi().digitalWrite(4,0);

webiopi().digitalWrite(17,0);

}

</script>

<div id="content" align="left"></div>

<button type="button" class="Default" onmousedown="mousedown(1);" onmouseup="mouseup(1);" id="1" label="f">f</button>

<button type="button" class="Default" onmousedown="mousedown(2);" onmouseup="mouseup(2);" id="2" label="b">b</button>

<button type="button" class="Default" onmousedown="mousedown(3);" onmouseup="mouseup(3);" id="3" label="l">l</button>

<button type="button" class="Default" onmousedown="mousedown(4);" onmouseup="mouseup(4);" id="4" label="r">r</button>

</body>

</html>

I didn’t use the webiopi wrappers for creating buttons because they did not seem to work with arguments, and I would rather pass an argument to my mouseup and mousedown than create a different function for each button.
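The same "one function, one argument" idea can also be written as a lookup table instead of a switch. This is a sketch, not what is running on my bot: the pin pairs are copied from the switch in test.html, while `DIRECTION_PINS`, `stopAll`, `drive` and the `write` parameter are made-up names. `write` stands in for webiopi().digitalWrite so the logic can be tried without a Pi. Unlike my switch, this version always turns everything off before setting a direction.

```javascript
// All motor driver input pins used in test.html.
const MOTOR_PINS = [0, 1, 4, 17];

// Direction id -> pins driven high (copied from the switch in test.html).
const DIRECTION_PINS = {
  1: [0, 4],  // f
  2: [1, 17], // b
  3: [1, 4],  // l
  4: [0, 17], // r
};

// Drive every motor pin low. `write(pin, value)` is a stand-in for
// webiopi().digitalWrite(pin, value).
function stopAll(write) {
  MOTOR_PINS.forEach((pin) => write(pin, 0));
}

// Stop first, then raise the pins for the requested direction.
// An unknown direction just stops, like the default case in the switch.
function drive(dir, write) {
  stopAll(write);
  (DIRECTION_PINS[dir] || []).forEach((pin) => write(pin, 1));
}

// Dry run with a fake writer that records the calls.
const demoLog = [];
drive(3, (pin, value) => demoLog.push(pin + "=" + value));
console.log(demoLog.join(" ")); // prints "0=0 1=0 4=0 17=0 1=1 4=1"
```

On the page you would call it as `drive(1, function (pin, value) { webiopi().digitalWrite(pin, value); })` from onmousedown and `stopAll(...)` from onmouseup.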

Wrap Up

The other thing you will notice is that on startup the gpio are inputs and could be in a bad state. You can’t wait for your webpage to load before fixing them. Fortunately webiopi has a way of setting them up on start in the config file. You can read about that here

https://code.google.com/p/webiopi/wiki/CONFIGURATION#GPIO_setup

Apart from that, my bot runs one side for a few seconds when I first start up, and when the battery dies it remains in whatever state it was in, even if that state was going forward. To fix the first problem I plan to add a circuit that keeps my motor drivers from activating until a timer has expired. The second problem I also plan to solve with a circuit, so that when the battery gets low the pi is notified and can set the gpio and notify the user before shutting off.

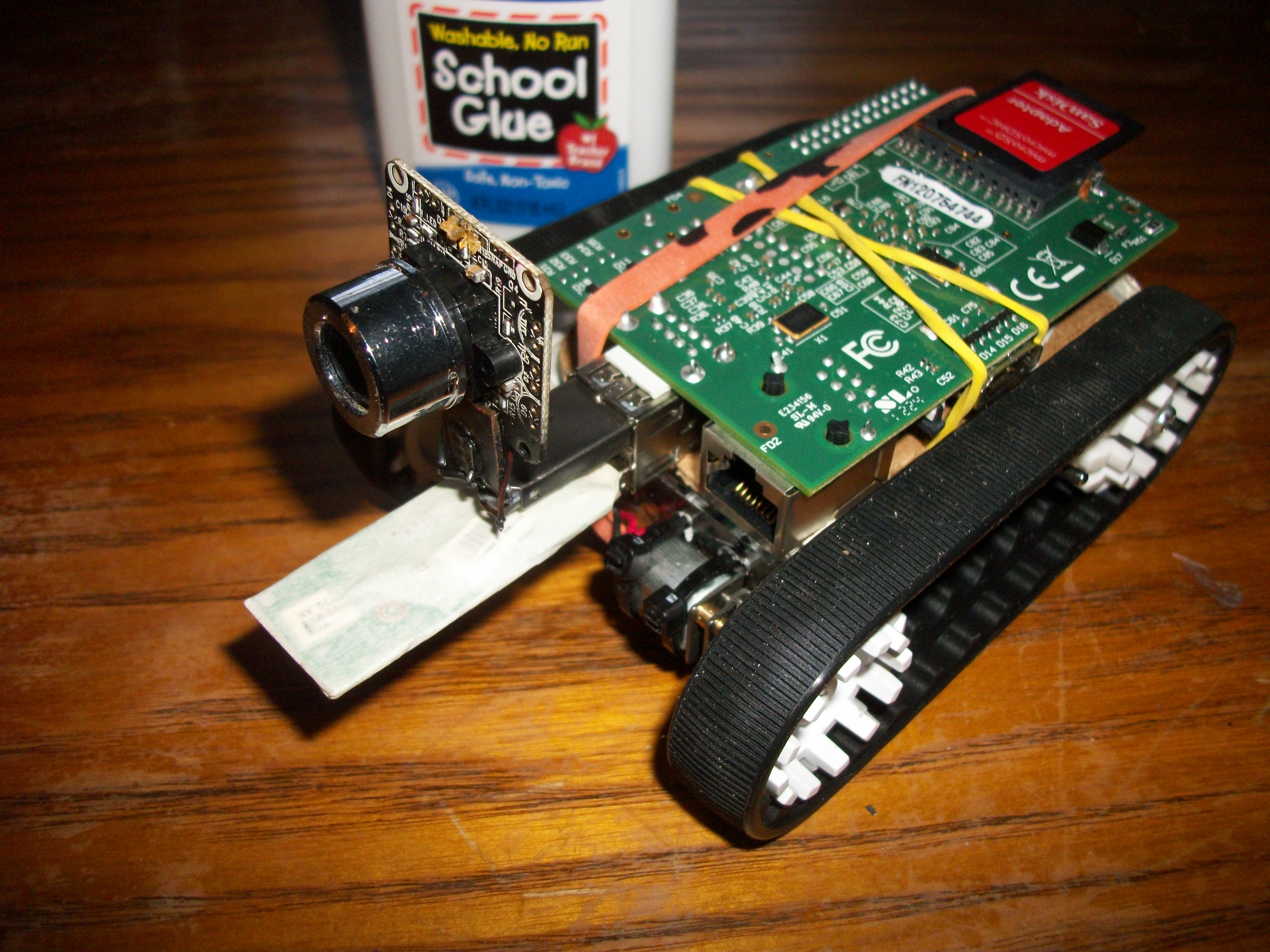

Hardware

I’m using an LM7805 driven by two CR123 lithium batteries. I can drive around for about 30 minutes before my batteries die. It does not take long to recharge them and I have an extra pair that is always charging. The batteries themselves are very lightweight and are only about $1 each on ebay. There is a big cap on the 5v side of the 7805 in case the supply dips during motor drive, so the circuit does not lose power when we put a lot of current into the motors.

My motor drivers are TA7291S/SG because that’s what I had around. I soldered them dead bug style to a female header. This lets the motor drivers and power circuitry stay with the body and simply plug into the pi gpio header.

I’m using Pololu 30T track set http://www.pololu.com/catalog/product/1416 and some micro motors I got off ebay a while ago. The case was built from plexiglass from a design I made years ago.

Update: Speed/fps issues!

I was going crazy trying to figure out why, with USB 2.0 running at “480 Mbit/s (effective throughput up to 35 MB/s or 280 Mbit/s)”, my framerate drops to almost nothing when I use 320×240. Then it occurred to me: even though what goes to the webpage is pretty small, my camera does not have jpeg compression, so everything going through the usb port is raw, and the usb port is shared with the wifi. So those two devices are quickly going to max out the bandwidth.

Just a quick math note, the raw data going through my port is

320 x 240 x 2 bytes = 153,600 bytes per frame; at 10 fps that is 1,536,000 bytes/s, which is about 1.5 MB/s ….

Yeah, I take all that back: the usb should not be the bottleneck. It might be having the two devices on the same port. Just as a test I used a raw usb cam on my normal pc and was getting real time at 640×480. So I don’t know. I’ll try to test a cam that supports h.264 or mjpg streaming once I get one. For now it looks like low resolution and 150Kbs will have to do. Maybe the “Raspberry PI CSI” camera will solve all of this?
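The math note above is easy to redo for other resolutions. A small sketch, assuming raw YUYV at 2 bytes per pixel as in my calculation; `rawBytesPerSecond` is a made-up helper name.

```javascript
// Raw (uncompressed) video data rate in bytes per second.
// bytesPerPixel defaults to 2, which matches YUYV.
function rawBytesPerSecond(width, height, fps, bytesPerPixel = 2) {
  return width * height * bytesPerPixel * fps;
}

console.log(rawBytesPerSecond(160, 120, 10)); // prints 384000
console.log(rawBytesPerSecond(320, 240, 10)); // prints 1536000
console.log(rawBytesPerSecond(640, 480, 30)); // prints 18432000
```

Even the 640×480 at 30 fps figure, about 18.4 MB/s, is under the 35 MB/s effective throughput quoted above, which fits the conclusion that raw usb bandwidth alone does not explain the slowdown.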

I’m a dummy with the Raspberry Pi, but I want to build the exact same robot as yours. I really appreciate your work, so I would kindly like to ask you to teach me how to make this robot.

Thanks heaps for sharing all of this… just a question – how do you hook up the motor drivers to the Raspberry Pi board? And will any motor driver work?

Cheers

Paul

You can’t drive the motors directly from the RPi. I used a Toshiba H-bridge, which basically takes low-level 3.3v signals from the pi and lets them control the larger voltage/amps coming from your battery. If you want the *easiest* way to control a motor and you only want one direction (can’t go backwards), just hook up a mosfet on one side of your motor and control the gate with the rpi gpio.

Google “mosfet as a switch”. You want a pfet if you’re switching between your motor and +, or an nfet if you’re switching your motor’s path to ground.

Good luck

About frame rate: using mjpg streamer makes sense if you have a UVC compatible camera that does mjpg compression directly in the camera. That typically means the camera supports a few resolutions that work with the onboard compression, the smallest typically being VGA, i.e. 640×480. You also have to select the right compression format, maybe mpg, but you can trial-and-error the setup in mjpg streamer until it works optimally. Then you get a 2-4% load on the Raspberry Pi from handling the streaming alone. To circumvent USB bottlenecks altogether, the Raspberry camera is the way to go. It supports H264 directly and you can get 720 @ 30 fps on even smaller bandwidth than Mjpg Streamer uses. There are guides on setting up an Nginx streaming server on Raspbian, serving the video feed into a frame on your GPIO controller page or into an embedded VLC player window.

Don’t ask for a more detailed writeup as I did this two years ago, admittedly not as slick a control as yours: SSH’ing from a terminal window to move a Roomba, with continuous rotation servo control of its wheel motors.

Cheers

Thanks for the comment. I’ve learned a lot since I made that article. I too thought the rpi camera was going to be the best thing ever and was ready to use it. It costs as much as the rpi itself (modelA) and the latency is awful. Yes it does high res video but it does not work well for the real time streaming. I think I made another article about it.

I’ve long since then moved back to an embedded solution that cost much less and goes much faster. My goal is to make a driveable robot with real time like latency.

Try using gstreamer for the video feed! I had the same problem as you, and after trying different methods I found gstreamer to be the best. I am getting a negligible lag of around 200ms.


Hey love the work you did on this. Hope you’re making progress on developing a ROS package for it. I’m trying to get something just like this working, but I’m using an RPi 2 with Ubuntu 14.04 and have had no luck getting Weaved running on it. You mentioned it might work with any ‘debian-based’ distro, so I was hoping someone might have had better luck. Great webpage too by the way. Thanks for any help you can give.

-Luke